What's Missing From LLM Chatbots: A Sense of Purpose

Sources: https://thegradient.pub/dialog, The Gradient

Overview

Purposeful dialogue treats LLM chatbots as collaborative agents that pursue goals across multiple exchanges rather than as single-turn text predictors. The article envisions human–AI collaboration built on dialogue that iteratively refines information, negotiates preferences, and updates each party’s model of the world. The idea spans simple tasks, such as travel planning, and more complex engineering work, such as code generation, where back-and-forth communication helps clarify requirements, gather missing data, and reduce defects.

Historically, dialogue systems evolved from scripted interactions (e.g., Schank’s restaurant script) and early chatbots (ELIZA, PARRY) to modern LLMs, where the dialogue history must be formatted for models that process strings rather than structured conversations. The article outlines three core stages:

1) Pretraining: a sequence model is trained to predict the next token on large, mixed-text corpora.

2) Dialogue formatting: the history is represented as a single string containing a system prompt and past exchanges (e.g., blocks).

3) RLHF: fine-tuning with rewards or penalties helps align outputs with desired behavior.

The authors note that current systems rely heavily on system prompts for behavior control, yet over several rounds models can drift from these prompts and even become more vulnerable to jailbreaking or hallucinations. They also discuss empirical findings showing that long-context models can be distracted in dialogue contexts, motivating techniques like split-softmax to mitigate such effects. In short, meaningful dialogue can enable long-term, goal-directed collaboration, but it also poses new research and safety challenges that are not fully captured by one-shot benchmarks.
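The dialogue formatting stage described above can be illustrated with a small sketch that flattens a system prompt and a list of past exchanges into one string. The role markers (`[SYSTEM]`, `[USER]`, `[ASSISTANT]`) and the function name `format_dialogue` are hypothetical; real chat models each define their own template, and the article does not prescribe this one.

```python
from typing import List, Tuple

# Hypothetical role markers, chosen only for illustration.
ROLE_TAGS = {"system": "[SYSTEM]", "user": "[USER]", "assistant": "[ASSISTANT]"}


def format_dialogue(system_prompt: str, history: List[Tuple[str, str]]) -> str:
    """Flatten a system prompt and (role, text) turns into one prompt string."""
    lines = [f"{ROLE_TAGS['system']} {system_prompt}"]
    for role, text in history:
        if role not in ("user", "assistant"):
            raise ValueError(f"unknown role: {role!r}")
        lines.append(f"{ROLE_TAGS[role]} {text}")
    # End with an open assistant tag so a model would continue as the assistant.
    lines.append(ROLE_TAGS["assistant"])
    return "\n".join(lines)


if __name__ == "__main__":
    history = [
        ("user", "I want to plan a trip."),
        ("assistant", "Where would you like to go?"),
        ("user", "Europe, 5 days, moderate budget."),
    ]
    print(format_dialogue("You are a harmless and helpful assistant.", history))
```

Because the model only ever sees this string, the system prompt is just the first few tokens of a growing input, which is one intuition for why its influence may fade over many rounds.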

Key features

- Multi-turn, goal-driven dialogue with turn-taking across a sequence of exchanges

- Memory and user-profile updates to adapt to preferences over time

- System prompts and dialogue formatting to steer behavior and safety

- RLHF as a fine-tuning step that complements pretraining and formatting

- Ability to read external information sources (e.g., Twitter, arXiv, Slack, NYT) and summarize or draft accordingly

- Collaboration with humans akin to pair programming, reducing defects through back-and-forth clarification

- Potential for long-term personalization, such as drafting emails and learning from edits

- Awareness of limitations: brittleness of instruction following, safety concerns, and drift over rounds

- Context-length considerations: longer contexts don’t always translate to better adherence in dialogue; techniques like split-softmax are proposed to mitigate this
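The summary names split-softmax without describing it. As a rough intuition only, the sketch below assumes the technique rescales a softmax attention distribution so that the tokens of the system prompt keep a larger share of the total attention mass. The exponent `k`, the function names, and the exact rescaling rule are assumptions made for illustration; the formulation in the original work may differ.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of raw attention scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def split_softmax(scores: List[float], n_system: int, k: float = 0.5) -> List[float]:
    """Rescale attention so the first n_system tokens hold mass pi**k instead of pi.

    pi is the attention mass the system-prompt tokens receive under a plain
    softmax. For 0 < k < 1 and 0 < pi < 1, pi**k > pi, so the system prompt
    gains attention and the remaining tokens lose it proportionally.
    """
    if not 0 < n_system < len(scores):
        raise ValueError("n_system must leave at least one non-system token")
    if not 0 < k <= 1:
        raise ValueError("k must be in (0, 1]")
    attn = softmax(scores)
    pi = sum(attn[:n_system])
    if pi <= 0.0 or pi >= 1.0:
        return attn  # degenerate case: nothing meaningful to rebalance
    new_pi = pi ** k
    sys_scale = new_pi / pi
    rest_scale = (1.0 - new_pi) / (1.0 - pi)
    return [a * (sys_scale if i < n_system else rest_scale) for i, a in enumerate(attn)]


if __name__ == "__main__":
    # One system-prompt token followed by three dialogue tokens, equal raw scores.
    print(split_softmax([0.0, 0.0, 0.0, 0.0], n_system=1, k=0.5))
```

Setting `k = 1` recovers the plain softmax, so in this sketch `k` acts as a dial for how strongly attention is pulled back toward the system prompt.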

Common use cases

- Travel planning or other goal-oriented tasks that benefit from iterative clarification

- Personal assistants that build user models over time (e.g., morning news summaries tailored to preferences)

- Code generation and software engineering tasks requiring back-and-forth with engineers to refine requirements and data

- Psychotherapist or customer-service style roles, subject to safety and role constraints

- Personal knowledge work, such as reading resources (Twitter, arXiv, Slack, NYT) and producing summaries or drafts

- Drafting emails or documents, improving through user edits
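Several of the use cases above depend on a user model that changes with feedback. A minimal sketch of that idea, assuming one running preference score per topic, follows. The class name `UserProfile`, the `learning_rate` parameter, and the update rule are hypothetical and are not taken from the article.

```python
from typing import Dict, List


class UserProfile:
    """Toy user model: one running preference score per topic, in [-1, 1]."""

    def __init__(self, learning_rate: float = 0.5) -> None:
        self.learning_rate = learning_rate
        self.scores: Dict[str, float] = {}

    def update(self, topic: str, liked: bool) -> None:
        """Move the topic score part of the way toward +1 (liked) or -1 (disliked)."""
        target = 1.0 if liked else -1.0
        current = self.scores.get(topic, 0.0)
        self.scores[topic] = current + self.learning_rate * (target - current)

    def rank(self, topics: List[str]) -> List[str]:
        """Order candidate topics from most to least preferred; unseen topics score 0."""
        return sorted(topics, key=lambda t: self.scores.get(t, 0.0), reverse=True)


if __name__ == "__main__":
    profile = UserProfile()
    profile.update("ai", liked=True)        # user kept the AI story
    profile.update("sports", liked=False)   # user deleted the sports story
    print(profile.rank(["sports", "weather", "ai"]))
```

In a morning-news assistant, each kept or deleted summary could feed `update`, and `rank` could decide which stories to summarize first the next day.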

Setup & installation

No explicit setup or installation commands are provided in the source article.

Quick start

    # Minimal runnable example (conceptual).
    # Demonstrates a turn-based dialogue structure with a system prompt.

    def respond(prompt, history, system_prompt):
        # Placeholder for LLM inference in an interactive dialogue.
        # A real implementation would send system_prompt, history, and prompt to a model.
        return f"[LLM response to '{prompt}' with system '{system_prompt}']"


    def run():
        system_prompt = "harmless and helpful"
        history = []  # (role, text) pairs accumulated across turns
        turns = [
            {"role": "user", "text": "I want to plan a trip."},
            {"role": "user", "text": "Focus on Europe, 5 days, moderate budget."},
        ]
        for t in turns:
            assistant = respond(t["text"], history, system_prompt)
            history.append(("user", t["text"]))
            history.append(("assistant", assistant))
            print(assistant)


    if __name__ == "__main__":
        run()

Pros and cons

- Pros

  - Enables goal-directed, multi-turn collaboration between humans and AI

  - Allows selective information exchange across rounds, improving efficiency

  - Facilitates memory and personalization, adapting to user preferences over time

  - Supports back-and-forth workflow improvements in domains like coding

- Cons

  - Models can drift from system prompts across rounds, raising safety concerns

  - Vulnerability to jailbreaking and hallucinations can increase as the model drifts from its system prompt

  - Longer dialogues may still face limitations despite larger context windows

  - Implementing robust turn-taking and memory requires careful engineering
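One engineering concern behind the last two cons is deciding what to keep as a dialogue grows. A common pattern, which the article does not prescribe, is to hold the system prompt separately and drop the oldest turns once a budget is exceeded. The sketch below assumes a word count as a crude stand-in for tokens; `trim_history` and `max_words` are hypothetical names.

```python
from typing import List, Tuple

Turn = Tuple[str, str]  # (role, text)


def trim_history(history: List[Turn], max_words: int) -> List[Turn]:
    """Keep the most recent turns whose combined word count fits max_words."""
    kept: List[Turn] = []
    used = 0
    # Walk backwards so the newest turns are kept first.
    for role, text in reversed(history):
        cost = len(text.split())
        if used + cost > max_words:
            break
        kept.append((role, text))
        used += cost
    kept.reverse()  # restore chronological order
    return kept


if __name__ == "__main__":
    system_prompt = "harmless and helpful"  # stored apart, so it is never trimmed
    history = [
        ("user", "I want to plan a trip."),
        ("assistant", "Where would you like to go?"),
        ("user", "Europe, 5 days, moderate budget."),
    ]
    print(system_prompt, trim_history(history, max_words=12))
```

Trimming controls prompt length but does not by itself address drift; it only guarantees that the system prompt is still present in every request.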

Alternatives (brief comparisons)

| Approach | Description | Strengths | Limitations |
|---|---|---|---|
| One-shot instruction following | Single-turn prompts guiding the model for a task | Simple, fast, low latency | May fail to capture evolving goals; limited context |
| Purposeful dialogue (multi-turn) | Iterative exchanges to achieve a goal | Better information exchange; memory and personalization potential | More complex to implement; safety and stability challenges |
| RLHF-tuned interactive systems | Fine-tuned with human feedback for interactive tasks | Often better-aligned and robust across tasks | Data requirements; risk of overfitting to feedback loops |

Pricing or License

Not specified in the source article.

References

More resources

CUDA Toolkit 13.0 for Jetson Thor: Unified Arm Ecosystem and More

Unified CUDA toolkit for Arm on Jetson Thor with full memory coherence, multi-process GPU sharing, OpenRM/dmabuf interoperability, NUMA support, and better tooling across embedded and server-class targets.

Cut Model Deployment Costs While Keeping Performance With GPU Memory Swap

Leverage GPU memory swap (model hot-swapping) to share GPUs across multiple LLMs, reduce idle GPU costs, and improve autoscaling while meeting SLAs.

Fine-Tuning gpt-oss for Accuracy and Performance with Quantization Aware Training

Guide to fine-tuning gpt-oss with SFT + QAT to recover FP4 accuracy while preserving efficiency, including upcasting to BF16, MXFP4, NVFP4, and deployment with TensorRT-LLM.

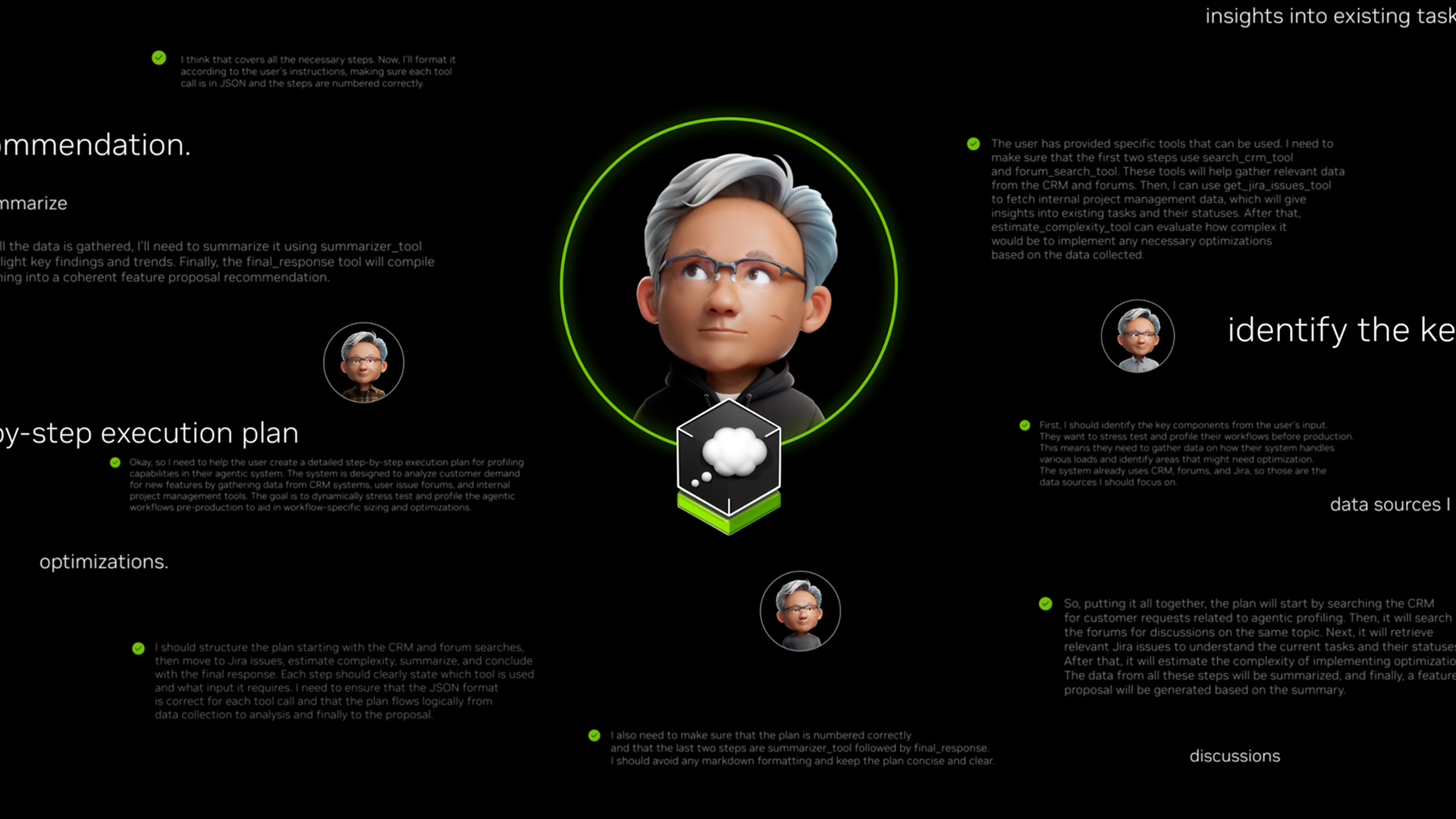

How Small Language Models Are Key to Scalable Agentic AI

Explores how small language models enable cost-effective, flexible agentic AI alongside LLMs, with NVIDIA NeMo and Nemotron Nano 2.

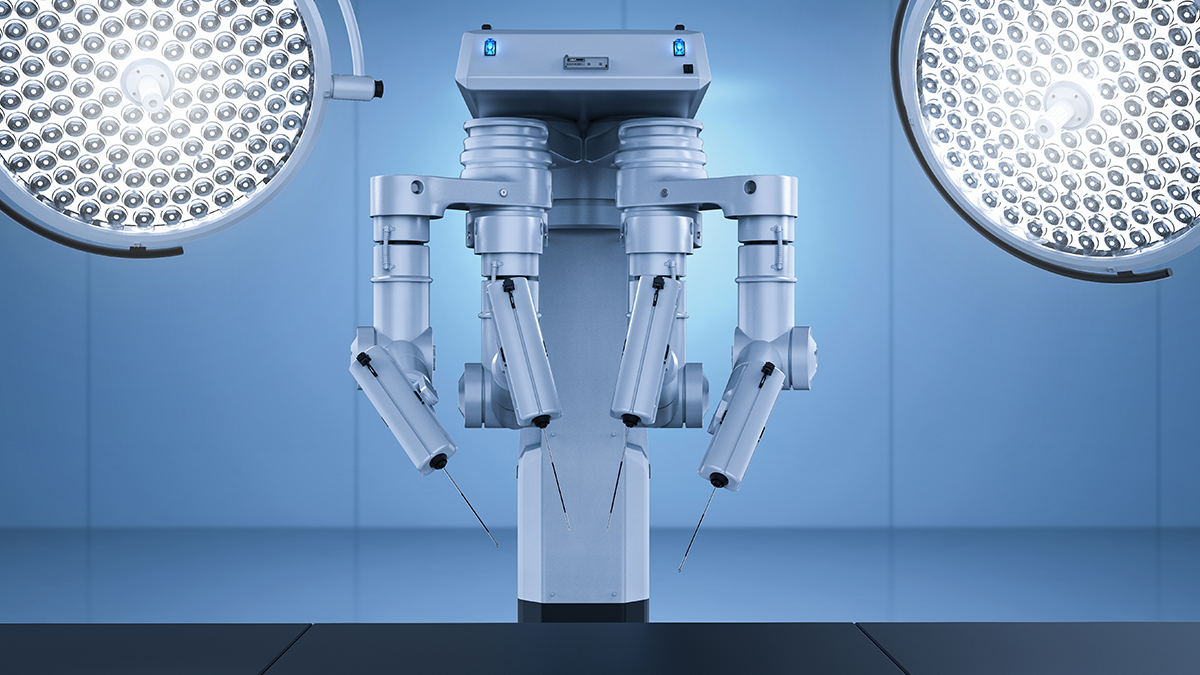

Getting Started with NVIDIA Isaac for Healthcare Using the Telesurgery Workflow

A production-ready, modular telesurgery workflow from NVIDIA Isaac for Healthcare unifies simulation and clinical deployment across a low-latency, three-computer architecture. It covers video/sensor streaming, robot control, haptics, and simulation to support training and remote procedures.

How to Scale Your LangGraph Agents in Production From a Single User to 1,000 Coworkers

Guidance on deploying and scaling LangGraph-based agents in production using the NeMo Agent Toolkit, load testing, and phased rollout for hundreds to thousands of users.